Why Small Language Models Are the Future of Agentic AI

1. Introduction to Agentic AI and Language Models

- What Agentic AI is and how it works

- Difference between Large Language Models (LLMs) and Small Language Models (SLMs)

- Why this shift is important now

2. The Limitations of Large Language Models in Agentic Systems

- Cost, latency, and energy challenges

- Lack of predictability and control

- Scalability issues in real-world applications

3. Why Small Language Models Are Gaining Momentum

- Efficiency and lower resource requirements

- Better control and structured outputs

- Rise of specialized, task-focused models

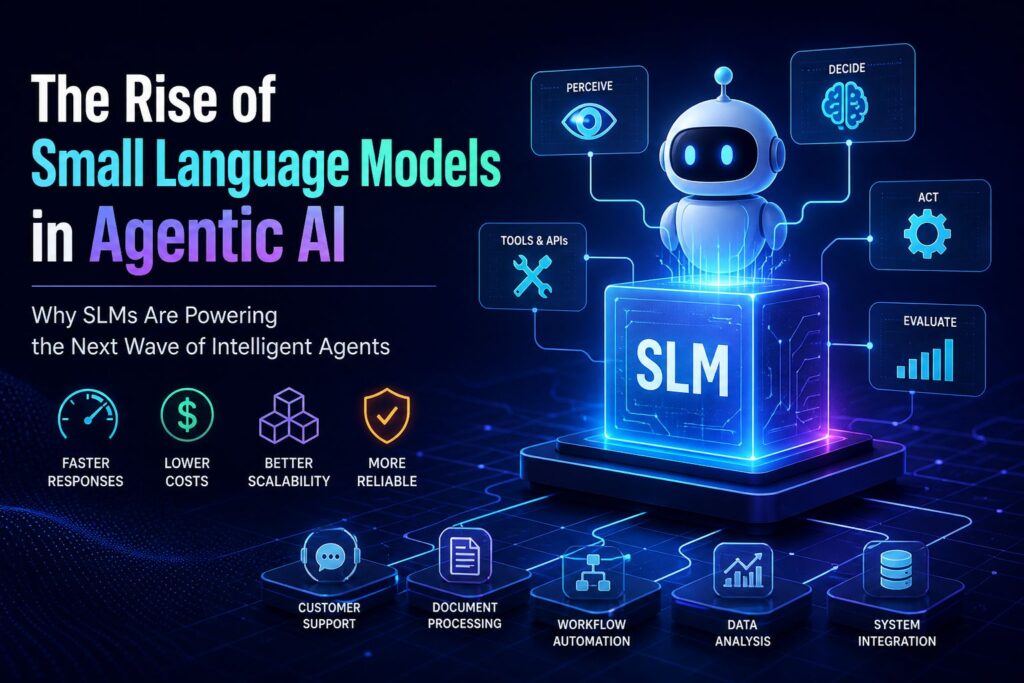

4. Key Advantages of SLMs in Agentic AI

- Faster response times and reduced costs

- Domain-specific expertise and fine-tuning

- Improved reliability and alignment

- Flexibility in multi-model (heterogeneous) systems

5. How SLM-Based Agents Work in Practice

- Role of SLMs inside agent architectures

- Combining multiple small models for complex tasks

- Integration with tools, APIs, and workflows

6. Real-World Applications of SLMs in Agentic Systems

- Business automation and enterprise use cases

- Industry-specific deployments

- Department-level AI agents (e.g., support, operations, IT)

7. Research and Innovations Driving SLM Growth

- Advances in compact model design

- New evaluation methods for AI agents

- Trends like model compression and efficient inference

8. Transitioning from LLMs to SLMs

- Practical migration strategies

- Hybrid approaches (LLM + SLM systems)

- Implementation challenges and how to overcome them

9. Challenges and When SLMs May Not Be Ideal

- Complex reasoning limitations

- Data dependency and training constraints

- Scenarios where large models still outperform

10. The Future of Agentic AI with Small Language Models

- Long-term industry trends

- Democratization of AI development

- Final assessment: why smaller, smarter models may win

1. Introduction to Agentic AI and Language Models

Artificial Intelligence is shifting fast. It’s no longer just about chatbots or generating text. The focus is now on Agentic AI: systems that can plan, make decisions, and take actions with limited human supervision.

At the center of this shift are language models. For years, Large Language Models (LLMs) dominated the space. They are powerful, but they come with high costs, slow responses, and heavy resource demands. This makes them harder to scale in real-world systems.

Now, a new approach is gaining attention—Small Language Models (SLMs). These models are lighter, faster, and more focused. Instead of doing everything, they are designed to do specific tasks extremely well.

This change is not just a technical upgrade. It’s a strategic shift. In Agentic AI, efficiency, control, and reliability matter more than raw size.

So, are bigger models really better? Or is the future built on smaller, smarter systems?

Let’s explore why Small Language Models are becoming the backbone of Agentic AI.

2. The Limitations of Large Language Models in Agentic Systems

Large Language Models (LLMs) brought a major breakthrough in AI. They can write, reason, summarize, and even code. But when we move from simple chat use to Agentic AI systems, their weaknesses become much more visible.

Agentic AI is not just about generating text. It requires planning, taking actions, using tools, and making decisions in real time. This is where LLMs start to struggle.

High cost and resource demands

LLMs are expensive to run. They require powerful GPUs and large memory systems. Every request consumes significant compute.

In agent-based systems, this becomes a serious issue. Agents often need multiple steps to complete one task. If each step relies on a large model, the cost multiplies quickly. This makes real-time and large-scale deployment difficult.
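A rough back-of-the-envelope sketch shows how per-step cost compounds over a multi-step task. The prices, step count, and token counts below are hypothetical placeholders chosen only to illustrate the scaling, not real vendor pricing.

```python
def cost_per_task(steps: int, tokens_per_step: int, price_per_1k_tokens: float) -> float:
    """Estimated cost of one agent task that makes `steps` model calls."""
    return steps * (tokens_per_step / 1000) * price_per_1k_tokens


# Hypothetical prices per 1,000 tokens (assumptions, not real quotes).
LARGE_PRICE = 0.01
SMALL_PRICE = 0.001

# The same 8-step task, with every step served by one model size.
large = cost_per_task(steps=8, tokens_per_step=1500, price_per_1k_tokens=LARGE_PRICE)
small = cost_per_task(steps=8, tokens_per_step=1500, price_per_1k_tokens=SMALL_PRICE)

print(f"large-model task: ${large:.3f}, small-model task: ${small:.3f}")
```

Because the cost is a product of steps, tokens, and price, any per-call saving is multiplied by the number of steps the agent takes.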

Latency slows down decision-making

Agentic AI systems are expected to act quickly. But LLMs are not always fast.

Because of their size, they take longer to process inputs and generate outputs. In multi-step workflows, this delay adds up. The result is slower agents that feel less responsive and less practical for real-world automation.

Lack of predictability and control

One of the biggest challenges is inconsistency. LLMs can produce different outputs for similar inputs. While this creativity is useful in content generation, it becomes a problem in agent systems.

Agents need reliable and structured outputs to take correct actions. Small variations in responses can break workflows, cause errors, or lead to unintended behavior.

Difficulty in task specialization

LLMs are general-purpose by design. They are trained to handle a wide variety of topics, not specific workflows.

But agentic systems often need narrow, focused intelligence—like handling customer support tickets, managing APIs, or analyzing financial data. LLMs are often “too broad” for these specialized roles.

Scaling problems in multi-agent environments

In advanced systems, multiple AI agents work together. Each agent may need to communicate, coordinate, or delegate tasks.

Using LLMs for every agent creates a bottleneck. It increases cost, reduces speed, and makes the system harder to optimize. As complexity grows, scalability becomes a major concern.

Energy and infrastructure limitations

Beyond performance, LLMs also have an environmental and infrastructure impact. Running them at scale requires significant energy consumption and cloud resources.

For companies building long-term AI systems, this becomes a sustainability and cost-efficiency issue.

Summary

LLMs are powerful, but not always practical for agentic systems. Their size, cost, and unpredictability create friction in real-world applications.

This gap is exactly what is pushing the industry toward a new direction—smaller, faster, and more efficient language models designed specifically for agents.

3. Why Small Language Models Are Gaining Momentum

As AI systems move from experimental tools to real-world agents, the industry is rethinking what actually matters most. It is no longer just about having the most powerful model. It is about building systems that are fast, stable, and cost-efficient.

This is where Small Language Models (SLMs) are starting to take center stage.

Efficiency becomes a top priority

SLMs are designed to be lightweight. They require fewer computational resources compared to large models.

This makes them ideal for agentic systems where multiple steps and decisions happen continuously. Instead of spending heavy compute on every action, SLMs allow systems to operate smoothly at scale.

The result is simple: lower cost, faster execution, and easier deployment.

Speed matters more in agent workflows

Agentic AI is not a single-response system. It works in loops—observe, decide, act, and repeat.

In this cycle, speed is critical. Even small delays can break the flow of automation.

SLMs respond faster because they have fewer parameters, so each generated token requires less computation. This allows agents to make decisions in real time, improving overall system performance and responsiveness.
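The observe, decide, act cycle can be sketched as a small loop. This is a minimal illustration: `run_agent` and the toy ticket queue are hypothetical names, and the `decide` callable stands in for what would be a model call in a real agent.

```python
from typing import Callable


def run_agent(observe: Callable[[], str],
              decide: Callable[[str], str],
              act: Callable[[str], None],
              max_steps: int = 10) -> int:
    """Run observe -> decide -> act until `decide` returns 'stop'.

    Returns the number of loop iterations that were started.
    """
    for step in range(1, max_steps + 1):
        observation = observe()
        action = decide(observation)  # in a real agent, a model call
        if action == "stop":
            return step
        act(action)
    return max_steps


# Toy environment: a queue of pending tickets.
queue = ["ticket-1", "ticket-2", "ticket-3"]
handled = []

steps = run_agent(
    observe=lambda: queue[0] if queue else "",
    decide=lambda obs: f"close:{obs}" if obs else "stop",
    act=lambda action: handled.append(queue.pop(0)),
)
```

Every iteration pays the latency of one `decide` call, which is why the speed of the model behind it dominates how responsive the loop feels.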

Specialization over generalization

Unlike large models that try to handle everything, SLMs are often trained or fine-tuned for specific tasks or domains.

This specialization makes them more accurate in focused environments. For example:

- Customer support automation

- Data extraction and structuring

- Workflow routing and decision-making

- API-based task execution

In agentic systems, this narrow focus is an advantage, not a limitation.

Better control and predictability

One of the biggest reasons SLMs are gaining attention is control.

Smaller models that are fine-tuned for a narrow task tend to behave more consistently on that task. Their outputs are easier to constrain, structure, and guide.

For agentic AI, this is extremely important. Agents must follow rules, produce structured outputs, and trigger actions without ambiguity. A narrowly scoped SLM makes this easier to achieve than a large general-purpose model whose output space is much broader.

Rise of multi-model systems

Modern AI systems are no longer built on a single model. Instead, they use multiple specialized models working together.

In this architecture:

- SLMs handle routine, repetitive tasks

- Larger models handle complex reasoning when needed

- Routing systems decide which model to use

This hybrid approach is becoming the standard in Agentic AI design.

Hardware and deployment flexibility

SLMs can run on a wider range of environments:

- Edge devices

- On-premise servers

- Lightweight cloud instances

- Even local systems in some cases

This flexibility is important for industries that need privacy, low latency, or offline capabilities.

Summary

Small Language Models are not just a simplified version of LLMs. They represent a shift in design philosophy.

Instead of maximizing size and capability, the focus is now on efficiency, specialization, and control.

And as Agentic AI systems become more complex, these qualities are becoming more valuable than raw power.

4. Key Advantages of SLMs in Agentic AI

Small Language Models (SLMs) are becoming a core building block in Agentic AI because they solve practical problems that large models struggle with in production. Their value is not just theoretical—it shows up directly in cost, speed, and system reliability.

Below are the key advantages that make SLMs especially powerful in agent-based architectures.

Faster response times and lower latency

In Agentic AI, speed is not optional. Agents often run continuous loops where every millisecond matters.

SLMs are smaller and require fewer computations per request. This leads to:

- Faster inference

- Lower response delay

- Smoother multi-step execution

When agents need to make decisions in real time—like routing a ticket, calling an API, or updating a workflow—this speed advantage becomes critical.

Significantly lower operational cost

One of the biggest limitations of large models is cost. Every call to a large model consumes significant compute resources.

SLMs reduce this burden dramatically:

- Less GPU usage

- Lower cloud costs

- Reduced energy consumption

In large-scale agent systems, where thousands or millions of decisions happen daily, this cost difference becomes a major factor in architecture decisions.

Better control and structured outputs

Agentic systems depend on predictable outputs. A small formatting error can break a workflow or trigger incorrect actions.

SLMs are easier to:

- Constrain with strict prompts

- Fine-tune for structured output formats (JSON, schemas, rules)

- Control through deterministic decoding settings (for example, greedy decoding or a low sampling temperature)

This makes them more reliable for automation-heavy environments where precision matters more than creativity.
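Whatever model produces the output, an agent typically validates it before acting on it. The sketch below assumes the model is asked to return a JSON object; the field names and the allowed actions are a hypothetical schema invented for illustration.

```python
import json

# Hypothetical schema: required fields and their expected types.
REQUIRED_FIELDS = {"action": str, "ticket_id": int}
ALLOWED_ACTIONS = {"route", "close", "escalate"}


def parse_action(raw: str) -> dict:
    """Parse model output; raise ValueError if it does not match the schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            raise ValueError(f"missing or mistyped field: {field}")
    if data["action"] not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {data['action']}")
    return data


# A well-formed output passes; anything else is rejected before any action runs.
print(parse_action('{"action": "route", "ticket_id": 42}'))
```

Rejecting malformed output at this boundary means a formatting slip results in a retry or an escalation rather than an incorrect action.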

Strong domain specialization

Unlike general-purpose LLMs, SLMs can be trained or fine-tuned for narrow, high-value tasks.

This includes:

- Customer support classification

- Document parsing and extraction

- Intent detection in workflows

- Business rule execution

Because they focus on specific tasks, well-tuned SLMs can match or even outperform larger general-purpose models in those targeted domains.

Improved scalability in agent ecosystems

Agentic AI systems are rarely built with a single model. They use multiple agents working together in pipelines or networks.

SLMs make this scalable because:

- They can run in parallel without heavy compute load

- They reduce bottlenecks in decision chains

- They allow more agents to operate simultaneously

This enables large-scale orchestration without costs spiraling as more agents and steps are added.
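As a small illustration of running lightweight models in parallel, the sketch below fans several requests out over a thread pool. The `classify` function is a keyword rule standing in for a small-model call, which is an assumption made so the example runs on its own.

```python
from concurrent.futures import ThreadPoolExecutor


def classify(text: str) -> str:
    """Placeholder for a small-model call (a keyword rule is used here)."""
    return "billing" if "invoice" in text.lower() else "general"


incoming = [
    "Invoice missing",
    "How do I reset my password?",
    "Wrong invoice total",
]

# pool.map returns results in the same order as the inputs.
with ThreadPoolExecutor(max_workers=4) as pool:
    labels = list(pool.map(classify, incoming))

print(labels)
```

Threads suit this pattern when each call mostly waits on a network or inference server; CPU-bound local inference would need a different concurrency strategy.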

Heterogeneous architecture support

Modern AI systems are shifting toward mixed-model environments.

In this setup:

- SLMs handle simple, repetitive tasks

- Medium models handle reasoning

- Large models are used only for complex edge cases

SLMs act as the first layer of decision-making, filtering and routing tasks efficiently. This improves the overall system architecture by reducing unnecessary load on larger models.

Better alignment and predictable behavior

One overlooked advantage of SLMs is behavioral stability.

Because they are smaller and more focused:

- They are easier to align with specific rules

- They show fewer unexpected outputs

- They behave more consistently across similar inputs

This predictability is essential when AI agents are connected to real-world systems like finance, healthcare, or enterprise automation.

Summary

The advantages of SLMs are not about raw intelligence—they are about system performance in real environments.

They deliver faster responses, lower costs, better control, and scalable architecture support. In Agentic AI, where reliability and efficiency matter more than size, these advantages make SLMs a foundational technology rather than just a lightweight alternative.

5. How SLM-Based Agents Work in Practice

Small Language Models (SLMs) are not used in isolation inside Agentic AI systems. Instead, they function as specialized components within a larger decision-making architecture. The real power comes from how these small models are orchestrated to act like intelligent agents.

To understand their role, it helps to see how a typical agentic system is structured.

SLMs as task-specific decision units

In Agentic AI, an “agent” is not just a model—it is a system that can:

- Perceive input

- Decide what to do

- Execute an action

- Evaluate the result

SLMs often handle the decision or classification step in this cycle.

For example:

- Identifying user intent

- Selecting the correct tool or API

- Determining the next workflow step

- Structuring input data for downstream systems

Instead of relying on one large model for everything, SLMs act like specialized decision engines inside the agent pipeline.

Multi-step workflows powered by small models

Agentic systems usually operate in multiple steps rather than a single response.

A simplified workflow might look like this:

- User request enters the system

- An SLM classifies the request type

- Another SLM selects the appropriate tool

- A separate module executes the action

- A final model formats or validates the output

Each SLM is responsible for a narrow but important function. This division of labor makes the system faster and more predictable.
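The workflow above can be sketched as a chain of narrow functions. All function names, tool names, and rules here are hypothetical; each placeholder marks where a small model or an external system would sit in a real pipeline.

```python
def classify_request(text: str) -> str:
    """Step 1: classify the request type (placeholder for an SLM)."""
    return "refund" if "refund" in text.lower() else "status"


def select_tool(request_type: str) -> str:
    """Step 2: pick a tool for the request type (placeholder for a second SLM)."""
    return {"refund": "payments_api", "status": "orders_api"}[request_type]


def execute(tool: str, text: str) -> dict:
    """Step 3: a separate module performs the action (stubbed out here)."""
    return {"tool": tool, "ok": True, "input": text}


def validate(result: dict) -> dict:
    """Step 4: check the result before it leaves the pipeline."""
    if not result.get("ok"):
        raise RuntimeError("tool call failed")
    return result


def handle(text: str) -> dict:
    """Run the full pipeline for one user request."""
    request_type = classify_request(text)
    tool = select_tool(request_type)
    return validate(execute(tool, text))


print(handle("I want a refund for my last order"))
```

Keeping each step behind its own function makes it possible to swap one model or test one stage without touching the rest.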

Tool use and API-driven intelligence

One of the most important roles of SLMs in agentic systems is tool selection and execution control.

Instead of generating long responses, SLM-based agents often:

- Choose which API to call

- Decide parameters for external tools

- Trigger workflows in business systems

For example, in a customer support system:

- One SLM detects the issue category

- Another SLM routes the ticket to the correct department

- A third SLM generates a structured response template

This makes agents more like automated decision routers than conversational models.

Coordination between multiple SLMs

Advanced systems use several SLMs working together rather than a single model.

This creates a layered architecture:

- Input SLMs → interpret and classify data

- Routing SLMs → decide next steps

- Execution SLMs → prepare structured outputs

- Validation SLMs → check correctness and consistency

This modular design reduces complexity and improves system reliability.

Fallback to larger models when needed

SLM-based systems are often hybrid. Not every task can be handled by small models.

An SLM can escalate a task to a larger model when it encounters:

- Complex reasoning

- Ambiguous queries

- High-risk decisions

This creates a smart routing system, where:

- SLMs handle the bulk of routine tasks (roughly 80–90% as a rule of thumb; the real share depends on the workload)

- LLMs handle edge cases and deep reasoning

This balance improves both efficiency and capability.
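One simple way to implement this kind of fallback is a confidence threshold. The sketch below assumes the small model returns a label together with a confidence score; the stub models and the 0.8 threshold are illustrative assumptions, not recommended values.

```python
from typing import Callable, Tuple


def answer_with_fallback(
    query: str,
    small_model: Callable[[str], Tuple[str, float]],
    large_model: Callable[[str], str],
    threshold: float = 0.8,
) -> Tuple[str, str]:
    """Use the small model when it is confident, otherwise escalate.

    Returns (answer, which_model_answered).
    """
    label, confidence = small_model(query)
    if confidence >= threshold:
        return label, "slm"
    return large_model(query), "llm"


# Stub models so the example is self-contained.
def stub_small(query: str) -> Tuple[str, float]:
    if "invoice" in query.lower():
        return "billing", 0.95
    return "unknown", 0.30


def stub_large(query: str) -> str:
    return "needs_review"


print(answer_with_fallback("Invoice overdue", stub_small, stub_large))
print(answer_with_fallback("Something unusual happened", stub_small, stub_large))
```

The threshold is the main tuning knob: raising it sends more traffic to the larger model, lowering it saves cost at the risk of more small-model mistakes.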

Integration with external systems

SLM-based agents are rarely standalone. They are tightly integrated with:

- APIs and microservices

- Databases and knowledge systems

- Automation tools (RPA, workflows, schedulers)

Their job is to act as the intelligence layer that connects systems together, not to replace them.

This makes them extremely valuable in enterprise environments where automation must interact with existing infrastructure.

Summary

In practice, SLM-based agents are not simple chat models. They are distributed intelligence components inside a structured system.

They classify, route, decide, and coordinate actions across multiple steps. When combined properly, they enable Agentic AI systems that are faster, cheaper, and more scalable than traditional LLM-only architectures.

6. Real-World Applications of SLMs in Agentic Systems

The real value of Small Language Models (SLMs) becomes clear when they are deployed in production environments. In Agentic AI systems, SLMs are not used for general conversation—they are embedded into workflows where speed, structure, and reliability matter more than broad intelligence.

Across industries, SLM-powered agents are already being used to automate decisions, reduce manual workload, and improve operational efficiency.

Enterprise automation and business workflows

One of the biggest adoption areas for SLM-based agents is enterprise automation.

Companies use them to:

- Route internal requests automatically

- Process documents and extract key information

- Trigger approvals in business workflows

- Generate structured reports from raw data

Instead of relying on humans for repetitive tasks, SLM agents act as workflow decision engines, ensuring processes run smoothly and consistently.

Customer support and service systems

Customer support is a natural fit for SLM-driven agents because most queries fall into predictable categories.

SLMs are used to:

- Classify customer queries (billing, technical, account issues)

- Suggest predefined responses or solutions

- Escalate complex cases to human agents or larger models

- Maintain consistent response formats across channels

This improves response time while reducing operational costs for support teams.

IT operations and system management

In IT environments, speed and accuracy are critical. SLM-based agents help automate routine operational tasks such as:

- Monitoring system logs

- Detecting anomalies

- Triggering alerts or automated fixes

- Routing incidents to appropriate teams

Instead of overwhelming engineers with raw data, SLMs act as intelligent filters and decision layers in IT workflows.

Finance and data processing systems

In financial systems, structured outputs and precision are essential.

SLMs are used for:

- Transaction categorization

- Fraud detection signal classification

- Financial report structuring

- Risk flagging in real time

Their ability to follow strict rules makes them suitable for environments where errors can have high consequences.

E-commerce and recommendation workflows

E-commerce platforms use SLM-based agents to improve both backend operations and customer experience.

They help with:

- Product classification and tagging

- Search query understanding

- Personalized recommendation routing

- Inventory decision support

Instead of relying on heavy models for every request, SLMs ensure fast and efficient decision-making at scale.

Industry-specific agent systems

SLMs are also being deployed in specialized industries where narrow expertise is more important than general intelligence:

- Healthcare: patient data structuring, appointment routing, report summarization

- Legal: document classification, clause extraction, case tagging

- Manufacturing: process monitoring, predictive maintenance alerts

- Logistics: route optimization decisions, shipment tracking automation

In each case, SLMs act as domain-focused intelligence layers that improve operational efficiency.

Summary

Across all these applications, one pattern is clear: SLMs are not replacing entire systems—they are powering the decision-making layers inside them.

By handling classification, routing, and structured outputs, they enable Agentic AI systems to operate faster, cheaper, and more reliably across industries.

7. Research and Innovations Driving SLM Growth

The rise of Small Language Models (SLMs) is not happening by accident. It is the result of focused research efforts aimed at solving the real limitations of large-scale AI systems. Over the past few years, academic labs and industry leaders have shifted attention from “bigger models” to smarter, more efficient architectures.

This shift is now shaping the foundation of modern Agentic AI.

Advances in compact model design

One of the biggest research directions is building models that deliver strong performance with fewer parameters.

Instead of scaling upward, researchers are focusing on:

- Efficient neural architectures

- Parameter sharing techniques

- Distillation from large models into smaller ones

This allows SLMs to retain useful knowledge while dramatically reducing size and compute requirements.

The goal is no longer just intelligence—it is intelligence per unit of compute.

Knowledge distillation and compression techniques

A major innovation driving SLM development is knowledge distillation.

In this approach:

- A large model (teacher) is trained first

- A smaller model (student) learns from its outputs

- The student model replicates performance patterns in a compact form

This method lets SLMs inherit much of the teacher’s behavior on the target tasks without inheriting the full computational burden of LLMs.
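One common formulation of distillation trains the student to match the teacher's softened output distribution. The sketch below computes that matching term as a KL divergence in plain Python; the temperature value and the toy logits are assumptions, and real training would combine this term with other losses inside a deep learning framework.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits: List[float],
                      student_logits: List[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) between temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


teacher = [1.0, 2.0, 3.0]
print(distillation_loss(teacher, [1.0, 2.0, 3.0]))  # identical outputs
print(distillation_loss(teacher, [3.0, 2.0, 1.0]))  # mismatched outputs
```

The loss is zero when the student reproduces the teacher's distribution and grows as the two diverge, which is the signal the student is trained to minimize.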

Other compression techniques include:

- Weight pruning

- Quantization

- Low-rank factorization

These methods make models faster and lighter while preserving essential performance.
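To make quantization concrete, here is a minimal sketch of symmetric 8-bit quantization applied to a short list of weights. It is a simplified illustration with made-up weights; production libraries typically quantize per tensor or per channel and handle many more details.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric linear quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Map the stored integers back to approximate float values."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 0.0, 0.49]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per weight is at most half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, f"max error = {max_error:.5f}")
```

Each weight is stored as one small integer plus a shared scale, which trades a bounded rounding error for a smaller memory footprint.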

Shift toward agent-focused evaluation

Traditional AI research evaluated models based on text quality or benchmark scores. But SLM research is changing this.

Now, models are increasingly evaluated on:

- Task completion ability

- Tool usage accuracy

- Multi-step reasoning in workflows

- Real-world agent performance

This is critical because Agentic AI is not about generating perfect text—it is about completing actions correctly in dynamic systems.
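Agent-focused metrics such as these can be computed from logged episodes. The sketch below uses a hypothetical `Episode` record and made-up data to show two of them: tool usage accuracy and task completion rate.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Episode:
    """One logged agent run (hypothetical record layout)."""
    expected_tool: str
    chosen_tool: str
    task_completed: bool


def evaluate(episodes: List[Episode]) -> Dict[str, float]:
    """Compute tool accuracy and task completion rate over a set of episodes."""
    if not episodes:
        raise ValueError("no episodes to evaluate")
    n = len(episodes)
    correct_tools = sum(e.chosen_tool == e.expected_tool for e in episodes)
    completed = sum(e.task_completed for e in episodes)
    return {
        "tool_accuracy": correct_tools / n,
        "task_completion_rate": completed / n,
    }


# Made-up episodes for illustration.
episodes = [
    Episode("orders_api", "orders_api", True),
    Episode("payments_api", "payments_api", True),
    Episode("orders_api", "payments_api", False),
    Episode("crm_api", "crm_api", False),
]
metrics = evaluate(episodes)
print(metrics)
```

Note that the two numbers can diverge: the last episode picks the right tool but still fails the task, which text-quality benchmarks alone would not reveal.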

Emergence of test-time efficiency research

Another important innovation is improving how models behave during inference.

Instead of increasing model size, researchers are optimizing:

- How long models think before responding

- How they allocate reasoning steps

- How efficiently they use context windows

This area is often referred to as test-time compute optimization, and it directly supports lightweight SLM deployment in production systems.

Multi-model and modular AI research

Modern research is also moving away from single-model systems.

Instead, there is growing focus on:

- Modular AI architectures

- Routing systems that select the best model per task

- Multi-agent frameworks where small models collaborate

This aligns perfectly with SLM usage in Agentic AI, where different models handle different roles in a pipeline.

Growing open-source ecosystem and industry support

The SLM movement is also being accelerated by open-source development.

Researchers and companies are releasing:

- Lightweight transformer models

- Efficient inference libraries

- Fine-tuning frameworks for small models

This ecosystem allows developers to experiment and deploy SLMs without massive infrastructure costs.

As a result, innovation is no longer limited to large AI labs—it is becoming widely accessible.

Summary

The growth of Small Language Models is being driven by a clear shift in AI research priorities.

Instead of focusing only on scale, the industry is now optimizing for:

- Efficiency

- Practical deployment

- Agent performance

- System-level intelligence

These innovations are laying the groundwork for a new era of AI, one where smaller models working together in structured systems can match or outperform large monolithic models on cost, latency, and reliability in many real-world applications.

8. Transitioning from LLMs to SLMs

Moving from Large Language Models (LLMs) to Small Language Models (SLMs) is not a simple replacement. It is a system redesign process. Most real-world AI systems today are not fully shifting away from LLMs—they are gradually restructuring workflows to use SLMs where they make the most sense.

This transition is driven by practical needs: cost reduction, faster execution, and better control in Agentic AI systems.

Understanding the hybrid transition approach

In most modern deployments, organizations do not fully abandon LLMs. Instead, they build hybrid architectures:

- LLMs handle complex reasoning, creativity, and edge cases

- SLMs handle routine decisions, classification, and structured tasks

- Routing systems decide which model to use

This creates a layered intelligence system where each model plays a specific role.

The key idea is simple: use LLMs less frequently, but more strategically.

Identifying tasks suitable for SLMs

The first step in transition is analyzing workflows and breaking them into components.

Tasks that are ideal for SLMs include:

- Intent classification

- Data extraction and formatting

- API selection and routing

- Workflow triggering decisions

- Repetitive customer queries

These tasks do not require deep reasoning but demand speed and consistency.

By separating these tasks, organizations can significantly reduce dependency on large models.

Introducing model routing systems

A critical part of transitioning is building intelligent routing layers.

These systems decide:

- When to use an SLM

- When to escalate to an LLM

- When to trigger fallback logic or human intervention

This routing can be based on:

- Input complexity

- Confidence scores

- Business rules

- Risk level of the task

Routing transforms AI systems from single-model pipelines into adaptive decision networks.
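A routing layer that combines these signals can start as a handful of ordered rules. The sketch below is one possible policy under stated assumptions: the `Task` fields, the thresholds, and the use of word count as a proxy for input complexity are all hypothetical choices.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical routing input."""
    text: str
    slm_confidence: float  # confidence reported by the small model
    risk: str              # "low", "medium", or "high"


def route(task: Task, min_confidence: float = 0.8, max_words: int = 50) -> str:
    """Return 'slm', 'llm', or 'human' for a task."""
    if task.risk == "high":
        return "human"   # business rule: high-risk tasks need human review
    if len(task.text.split()) > max_words:
        return "llm"     # long inputs are treated as complex
    if task.slm_confidence < min_confidence:
        return "llm"     # the small model is unsure, so escalate
    return "slm"


print(route(Task("reset my password", 0.95, "low")))
print(route(Task("reset my password", 0.40, "low")))
print(route(Task("wire funds to a new account", 0.99, "high")))
```

The rules are checked in order of severity, so a high-risk task goes to a human even when the small model is confident.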

Gradual replacement, not sudden migration

A common mistake is attempting to replace LLMs completely at once. In practice, transition works best in phases:

- Start by adding SLMs for low-risk tasks

- Move repetitive workflows away from LLMs

- Introduce routing between models

- Optimize performance and cost

- Expand SLM usage gradually across the system

This step-by-step approach reduces system risk while improving performance incrementally.

Fine-tuning SLMs for domain-specific roles

To replace LLM functions effectively, SLMs must be carefully trained or fine-tuned.

This involves:

- Using domain-specific datasets

- Training on structured outputs

- Reinforcing rule-based behaviors

- Aligning models with workflow requirements

Well-tuned SLMs can match or outperform LLMs in narrow domains because they are optimized for precision on a specific task, not for generality.

Infrastructure and deployment adjustments

Transitioning also requires changes in system architecture.

Organizations need to:

- Deploy lightweight inference servers for SLMs

- Optimize APIs for low-latency responses

- Design caching mechanisms for repeated tasks

- Support parallel execution of multiple small models

These changes ensure SLMs can operate efficiently at scale without bottlenecks.
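For repeated tasks, even a simple in-memory cache avoids paying for the same model call twice. The sketch below uses Python's standard `functools.lru_cache`; the classifier body is a placeholder rule, and the `stats` counter exists only to show how many calls actually reach the model.

```python
from functools import lru_cache

# Counts how many times the underlying "model" is actually invoked.
stats = {"model_calls": 0}


@lru_cache(maxsize=1024)
def classify_cached(text: str) -> str:
    """Placeholder for a small-model call, cached on the exact input text."""
    stats["model_calls"] += 1
    return "billing" if "invoice" in text else "general"


for message in ["invoice overdue", "hello", "invoice overdue", "hello"]:
    classify_cached(message)

print(stats["model_calls"], classify_cached.cache_info())
```

This cache only matches identical strings, so normalizing inputs (for example, trimming whitespace or lowercasing) before the call would raise the hit rate; a shared cache would be needed across multiple server processes.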

Key challenges during migration

Despite its benefits, transitioning is not without challenges:

- Identifying correct task boundaries

- Maintaining system consistency during migration

- Ensuring fallback reliability when SLMs fail

- Managing multiple models in production environments

These challenges require careful system design and continuous monitoring.

Summary

Transitioning from LLMs to SLMs is not about replacement—it is about restructuring intelligence across a system.

By combining hybrid architectures, intelligent routing, and task-level decomposition, organizations can gradually shift toward more efficient Agentic AI systems. The result is a setup where large models handle complexity, while small models handle scale, speed, and operational efficiency.

9. Challenges and When SLMs May Not Be Ideal

Small Language Models (SLMs) offer clear advantages in speed, cost, and scalability, but they are not a universal solution. Like any technology, they come with limitations. In some scenarios, relying on SLMs alone can reduce system quality or create new engineering problems.

Understanding these boundaries is important for designing reliable Agentic AI systems.

Limited deep reasoning capability

SLMs are optimized for efficiency, not complex reasoning. They perform well in structured and repetitive tasks, but struggle when:

- Multi-step logical reasoning is required

- Problems are highly ambiguous or abstract

- Long chains of dependencies must be tracked

In such cases, larger models still outperform them because they retain more world knowledge and reasoning capacity.

This is why hybrid systems are common—SLMs handle execution, while LLMs handle deep thinking.

Reduced general knowledge coverage

Because SLMs are smaller, they naturally store less information. This leads to limitations in:

- Broad factual knowledge

- Rare or niche domain understanding

- Cross-domain reasoning

If a task requires wide contextual awareness or open-ended knowledge, SLMs may produce incomplete or less accurate results.

This makes them less suitable for general-purpose AI assistants.

Performance degradation in complex tool orchestration

Agentic systems often require multiple tools, APIs, and workflows to work together.

SLMs can struggle when:

- Too many tools must be coordinated

- Decisions depend on long contextual history

- Multi-agent communication becomes complex

In these situations, smaller models may lose track of context or make incorrect routing decisions.

Sensitivity to training quality

SLMs depend heavily on the quality of their training or fine-tuning data.

If the dataset is narrow, biased, or poorly structured, the model will reflect those weaknesses more strongly than a larger model would. LLMs often compensate for noise in data due to their broader training scale, while SLMs are more sensitive to it.

Difficulty handling open-ended creativity

Tasks that require creativity, flexible thinking, or unpredictable output generation are not ideal for SLMs.

Examples include:

- Content ideation

- Story generation

- Strategic brainstorming

- Complex problem reframing

In these areas, LLMs still provide stronger performance because they are better at exploring diverse possibilities.

Risk of over-fragmentation in system design

One architectural challenge in SLM-based systems is over-decomposition.

When too many small models are introduced:

- System complexity increases

- Debugging becomes harder

- Coordination overhead grows

- Latency can accumulate across steps

Instead of simplifying systems, excessive use of SLMs can sometimes make them more difficult to manage.

When LLMs are still the better choice

Despite the rise of SLMs, there are clear cases where large models remain more suitable:

- Complex reasoning tasks

- Research and analysis requiring broad context

- High-stakes decision support

- Open-ended conversational systems

- Creative and generative applications

In these cases, the depth and flexibility of LLMs outweigh the efficiency benefits of smaller models.

Summary

SLMs are powerful, but not universally applicable. Their strengths lie in structured, repetitive, and well-defined tasks, while their weaknesses appear in complex reasoning and open-ended problems.

The most effective Agentic AI systems recognize this balance. They do not replace LLMs entirely—they combine both models strategically, using each where it performs best.

10. The Future of Agentic AI with Small Language Models

The future of Agentic AI is not centered around a single breakthrough model. It is moving toward systems of coordinated intelligence, where multiple specialized models work together to perform complex tasks. In this new structure, Small Language Models (SLMs) are expected to play a foundational role.

Instead of competing directly with Large Language Models, SLMs are becoming the operational backbone of agentic systems.

From monolithic models to modular intelligence

The early AI era focused on building one powerful model that could handle everything. That approach is slowly being replaced.

The next phase is modular:

- Different models handle different tasks

- Systems are built like interconnected components

- Intelligence is distributed instead of centralized

In this structure, SLMs act as the fast execution layer, handling decisions, routing, and structured outputs, while larger models are used only when necessary.

Rise of autonomous multi-agent ecosystems

Agentic AI is evolving from single agents into multi-agent systems.

In the future, we will see:

- Teams of AI agents working together

- Specialized SLMs handling micro-tasks

- Coordination layers managing communication between agents

This creates a digital workforce where each model has a defined role, similar to departments in an organization.

SLMs are ideal for this environment because they are:

- Fast

- Cheap to run

- Easy to replicate and scale

Efficiency becomes the new performance metric

For years, AI progress was measured by model size and benchmark scores. That is changing.

The new metrics include:

- Cost per task

- Latency per decision

- Energy efficiency

- Reliability in production systems

SLMs are naturally aligned with these priorities, which is why their adoption is expected to grow rapidly in enterprise AI.

Smarter routing and adaptive AI systems

Future Agentic AI systems will not rely on fixed pipelines. Instead, they will be adaptive and dynamic.

They will decide in real time:

- Which model to use

- How much reasoning is needed

- When to escalate to larger models

- When to execute actions directly

SLMs will serve as the first layer of intelligence in this decision chain, making systems faster and more efficient.

Expansion into edge and distributed environments

One of the most important future trends is deployment outside centralized cloud systems.

SLMs enable AI to run on:

- Edge devices

- Local servers

- Mobile systems

- Private enterprise infrastructure

This opens the door for:

- Offline agentic systems

- Privacy-focused AI deployments

- Real-time decision-making at the edge

This shift makes AI more accessible across industries and lets organizations keep sensitive data on infrastructure they control.

Democratization of agent development

As SLMs become more efficient and accessible, building AI agents will no longer be limited to large organizations.

We will see:

- Smaller companies deploying custom agents

- Developers building domain-specific AI tools

- Open-source agent ecosystems expanding rapidly

This democratization will accelerate innovation and reduce dependency on large AI platforms.

Final outlook

The future of Agentic AI is not about choosing between small or large models—it is about intelligent orchestration.

SLMs will handle speed, structure, and scalability. LLMs will handle depth and complexity. Together, they will form layered systems that are more efficient and practical than anything built on a single model.

In this future, intelligence is no longer defined by size. It is defined by how effectively systems coordinate multiple levels of AI to solve real-world problems.

Conclusion

Small Language Models are reshaping how Agentic AI systems are built and deployed. Instead of relying on massive, resource-heavy models, the industry is moving toward smaller, more efficient components that are easier to control, scale, and integrate. This shift is not about replacing large models entirely, but about using the right model for the right task. SLMs bring speed, cost efficiency, and reliability—three qualities that are essential for real-world AI agents. As agentic systems continue to evolve, the future will likely be defined by smart combinations of small models working together, rather than a single all-powerful system.